Solaris FireEngine Project

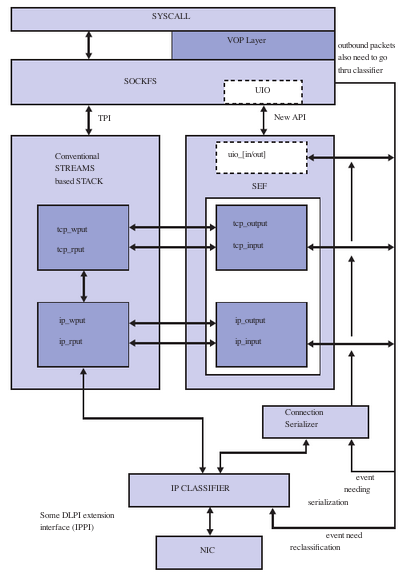

The Solaris FireEngine project is a high-performance networking framework based on an IP classifier and vertical perimeters. Its architecture is very different from the old BSD-style stack. FireEngine binds each connection to a CPU, ensuring that all packets for that connection are processed on the same CPU and taking full advantage of NUMA architectures. Keeping a connection's processing and data on one CPU improves cache locality and reduces cross-CPU synchronization, which helps the system keep pace with current 10-gigabit-per-second NICs (network interface controllers). See Figure 1-1.
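The classify-then-bind idea can be sketched in C as a hash table keyed on the TCP/IPv4 4-tuple, where each connection records the CPU it was bound to when it was inserted. This is a minimal illustration under stated assumptions, not the Solaris kernel's classifier API: `conn_key_t`, `classifier_insert`, `classifier_lookup`, the bucket count, and choosing the CPU from the hash value are all hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define CLASSIFIER_BUCKETS 256  /* hypothetical table size */

/* Lookup key: the TCP/IPv4 4-tuple (addresses and ports). */
typedef struct {
    uint32_t laddr, faddr;
    uint16_t lport, fport;
} conn_key_t;

/* Hypothetical connection structure. 'cpu' is chosen once, when the
 * connection is inserted, and every later packet uses the same value. */
typedef struct conn {
    conn_key_t key;
    int cpu;
    struct conn *next;  /* hash-chain link */
} conn_t;

typedef struct {
    conn_t *buckets[CLASSIFIER_BUCKETS];
    int ncpus;
} classifier_t;

/* Mix the 4-tuple into a bucket index. */
static unsigned conn_hash(const conn_key_t *k) {
    uint32_t h = k->laddr ^ k->faddr ^ (((uint32_t)k->lport << 16) | k->fport);
    h ^= h >> 16;
    h *= 0x45d9f3bu;
    h ^= h >> 16;
    return (unsigned)(h % CLASSIFIER_BUCKETS);
}

static int key_equal(const conn_key_t *a, const conn_key_t *b) {
    return a->laddr == b->laddr && a->faddr == b->faddr &&
           a->lport == b->lport && a->fport == b->fport;
}

/* Insert a connection and bind it to a CPU (assumption: derived from its hash). */
static void classifier_insert(classifier_t *cl, conn_t *c) {
    assert(cl->ncpus > 0);
    unsigned b = conn_hash(&c->key);
    c->cpu = (int)(b % (unsigned)cl->ncpus);
    c->next = cl->buckets[b];
    cl->buckets[b] = c;
}

/* Find the connection for an inbound packet's 4-tuple, or NULL if none. */
static conn_t *classifier_lookup(const classifier_t *cl, const conn_key_t *k) {
    for (conn_t *c = cl->buckets[conn_hash(k)]; c != NULL; c = c->next)
        if (key_equal(&c->key, k))
            return c;
    return NULL;
}
```

Because the CPU is stored in the connection, every packet that classifies to the same 4-tuple is steered to the same CPU. A real classifier would also handle listeners, wildcard binds, and IPv6, which this sketch omits.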

The main principle of the Packet Event Function (PEF) is the concept of send and receive event lists. An event list is simply an ordered series of functions that are called for each packet. The idea is to add a level of indirection that decides which functions are called for inbound and outbound packets, instead of hard-coding those function calls throughout the stack.
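An event list of this kind maps naturally onto an array of function pointers that is walked for each packet. The following is a minimal sketch, assuming that a handler returns nonzero to pass the packet on and 0 when it has consumed it; `event_list_t`, `event_list_append`, `event_list_run`, `ip_stage`, and `tcp_stage` are hypothetical names used only for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical packet; 'trace' records which handlers ran. */
typedef struct {
    unsigned len;
    unsigned trace;
} packet_t;

/* A handler returns nonzero to pass the packet on, 0 if it consumed it. */
typedef int (*event_fn_t)(packet_t *pkt);

#define EVENT_LIST_MAX 8

typedef struct {
    event_fn_t events[EVENT_LIST_MAX];
    size_t count;
} event_list_t;

/* Append a handler; returns 0 on success, -1 if the list is full. */
static int event_list_append(event_list_t *el, event_fn_t fn) {
    assert(fn != NULL);
    if (el->count == EVENT_LIST_MAX)
        return -1;
    el->events[el->count++] = fn;
    return 0;
}

/* Call the handlers in order until one consumes the packet.
 * Returns the number of handlers that were called. */
static size_t event_list_run(const event_list_t *el, packet_t *pkt) {
    size_t ran = 0;
    for (size_t i = 0; i < el->count; i++) {
        ran++;
        if (el->events[i](pkt) == 0)
            break;
    }
    return ran;
}

/* Two stand-in stages: an IP-like stage passes the packet on,
 * a TCP-like stage consumes it. */
static int ip_stage(packet_t *pkt)  { pkt->trace = pkt->trace * 10 + 1; return 1; }
static int tcp_stage(packet_t *pkt) { pkt->trace = pkt->trace * 10 + 2; return 0; }
```

For a list built as `ip_stage`, `tcp_stage`, `ip_stage`, running it on a packet whose `trace` starts at 0 calls only the first two handlers and leaves `trace` at 12. The caller never names the next function directly; it only walks the list.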

A block-level diagram is shown in Figure 1-1. For an inbound TCP packet, IP knows that the packet must go to TCP, but it does not know which TCP function to call. IP can call a common function (such as `tcp_rput`), or it can do some TCP processing itself to decide whether the packet is a SYN (and call `tcp_syn_input`) or a data packet (and call `tcp_data_input`). Things get even messier if IPsec is enabled, because IP must then call `ipsec_policy_check` before passing the packet to TCP.

The event-list approach instead creates an initial event list for each type of connection and lets each module manipulate its own portion of that list. The list is unique per connection. So while processing the `listen` call, TCP can change its portion of the receive event list to `tcp_syn_input`, because a SYN is what it expects. Once a SYN arrives and `tcp_syn_input` is called to process the packet, it changes the TCP entry to `tcp_data_input`, because data is what is expected next. Similarly, if IPsec is enabled on the system, it is straightforward to insert `ipsec_policycheck_input()` before the `tcp_*_input` entry in the receive event list.
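The per-connection list manipulation described above can be sketched as follows. This is a simplified model, not Solaris kernel code: the handlers below are stand-ins that only count calls, and `conn_listen`, `conn_enable_ipsec`, `conn_deliver`, and the `tcp_slot` field are hypothetical. TCP rewrites only its own slot, and enabling IPsec shifts TCP's entry one place to the right.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

typedef struct { unsigned len; } packet_t;          /* hypothetical packet */
typedef struct conn conn_t;
typedef void (*recv_fn_t)(conn_t *c, packet_t *p);

#define RECV_LIST_MAX 4

struct conn {
    recv_fn_t recv[RECV_LIST_MAX];  /* this connection's receive event list */
    size_t nrecv;
    size_t tcp_slot;                /* index of TCP's own entry */
    int syns, segs, policy_checks;  /* counters for illustration only */
};

/* Stand-ins for the functions named in the text. */
static void tcp_data_input(conn_t *c, packet_t *p) { (void)p; c->segs++; }

static void tcp_syn_input(conn_t *c, packet_t *p) {
    (void)p;
    c->syns++;
    /* Data is expected next, so TCP rewrites its own slot in place. */
    c->recv[c->tcp_slot] = tcp_data_input;
}

static void ipsec_policycheck_input(conn_t *c, packet_t *p) { (void)p; c->policy_checks++; }

/* While processing listen(), TCP sets its slot to expect a SYN. */
static void conn_listen(conn_t *c) {
    memset(c, 0, sizeof *c);
    c->recv[0] = tcp_syn_input;
    c->nrecv = 1;
    c->tcp_slot = 0;
}

/* Insert the IPsec check in front of TCP's entry; -1 if the list is full. */
static int conn_enable_ipsec(conn_t *c) {
    assert(c->tcp_slot < c->nrecv);
    if (c->nrecv == RECV_LIST_MAX)
        return -1;
    memmove(&c->recv[c->tcp_slot + 1], &c->recv[c->tcp_slot],
            (c->nrecv - c->tcp_slot) * sizeof c->recv[0]);
    c->recv[c->tcp_slot] = ipsec_policycheck_input;
    c->tcp_slot++;
    c->nrecv++;
    return 0;
}

/* Deliver a packet by calling every entry in the list, in order. */
static void conn_deliver(conn_t *c, packet_t *p) {
    for (size_t i = 0; i < c->nrecv; i++)
        c->recv[i](c, p);
}
```

After `conn_listen`, the first delivered packet reaches `tcp_syn_input`, which switches the slot to `tcp_data_input`; after `conn_enable_ipsec`, each later packet passes through `ipsec_policycheck_input` first. The delivery loop itself never changes.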

Because TCP has exclusive access to the connection and its event list (the connection is processed behind a squeue, or serialization queue), it can simply reference the current `edesc` (event descriptor) pointer and make the change. In other words, a packet coming in or going out should follow a very specific code path with minimal checks. TCP still has to verify that the packet is valid for the current state; if it is not, the packet is sent to `tcp_input`, which can serve as the general entry function.
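The exclusive-access property can be illustrated with a single-threaded model of a serialization queue. This is a minimal sketch, assuming that whoever enters an idle squeue becomes its owner and drains it, while packets that arrive during draining are queued for that owner instead of being processed concurrently. `squeue_enter`, `record_proc`, and the ring-buffer layout are hypothetical, and a real kernel implementation would protect the queue and `busy` flag with a lock, which is omitted here.

```c
#include <assert.h>
#include <stddef.h>

#define SQ_MAX 16

typedef struct squeue squeue_t;
typedef void (*sq_proc_t)(squeue_t *sq, int pkt);

struct squeue {
    int queue[SQ_MAX];      /* pending packets (ring buffer) */
    size_t head, count;
    int busy;               /* nonzero while an owner is draining */
    int processed[SQ_MAX];  /* order in which packets were handled */
    size_t nprocessed;
    sq_proc_t proc;         /* per-connection handler */
};

static int sq_push(squeue_t *sq, int pkt) {
    if (sq->count == SQ_MAX)
        return -1;
    sq->queue[(sq->head + sq->count) % SQ_MAX] = pkt;
    sq->count++;
    return 0;
}

/* If no one owns the squeue, the caller drains it (including anything
 * queued behind its packet); otherwise the packet is queued for the
 * current owner. Either way, only one context runs 'proc' at a time. */
static int squeue_enter(squeue_t *sq, int pkt) {
    assert(sq->proc != NULL);
    if (sq_push(sq, pkt) != 0)
        return -1;
    if (sq->busy)
        return 0;               /* the current owner will process it */
    sq->busy = 1;
    while (sq->count > 0) {
        int p = sq->queue[sq->head];
        sq->head = (sq->head + 1) % SQ_MAX;
        sq->count--;
        sq->proc(sq, p);
    }
    sq->busy = 0;
    return 0;
}

/* Example handler: packet 1 triggers a nested squeue_enter(). Because
 * the nested packet is queued, 1 is recorded before 99; re-entrant
 * processing would have recorded 99 first. */
static void record_proc(squeue_t *sq, int pkt) {
    if (pkt == 1)
        (void)squeue_enter(sq, 99);
    sq->processed[sq->nprocessed++] = pkt;
}
```

Entering packets 1 and then 2 records the order 1, 99, 2: the handler for packet 1 runs to completion before the nested packet is touched, which is the guarantee that lets TCP modify the connection's event list without extra locking.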

Because of the amount of changes required and the potential impact, the FireEngine project is split into three phases:

- Phase I puts the fundamental infrastructure in place and delivers a large performance boost. This phase will be implemented in the Solaris 10 OS.

- Phase II focuses on feature scalability, offloading issues, and the new event list-based framework. This phase will be implemented in Solaris 10 OS updates.

- Phase III converts other modules to the new event list-based framework so that they can also benefit from it.

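| | Phase 1 | Phase 2 | Phase 3 (FireHose) |
|---|---|---|---|
| Release | Solaris 10 | Solaris 10 Updates / Solaris Express | Solaris 11 |
| Features | Fundamental infrastructure; large performance boost | Feature scalability, offloading, event list-based framework | Other modules converted to the event list-based framework |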

FireEngine Features

The FireEngine project includes the following features:

- Uses an IP classifier to look up a connection

- Binds the connection to a particular CPU

- Processes the connection using a worker thread (a serialization queue ensures that only one thread at a time processes a given connection)

- Drives the entire TCP state machine through the IP classifier

- Offloads some IP classifier functionality (at least the connection-tagging ability) to the NIC

- Negotiates functional entry points to or from IP

- Has the NIC allocate all the memory needed for a packet and its metadata in one chunk
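The last feature above, allocating a packet and its metadata in one chunk, can be sketched with a C99 flexible array member so that a single `malloc()` and a single `free()` cover both. This is an illustration only and is not the Solaris STREAMS message-block layout; `pkt_meta_t`, `pkt_buf_t`, `pkt_alloc`, `pkt_data`, `pkt_free`, and the `conn_tag` field (standing in for a NIC-supplied connection tag) are hypothetical.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical per-packet metadata filled in by a driver. */
typedef struct {
    uint32_t conn_tag;  /* e.g. a connection tag supplied by the NIC */
    uint16_t len;       /* number of packet bytes that follow */
    uint16_t flags;
} pkt_meta_t;

/* Metadata and packet bytes share one allocation: the bytes follow
 * the metadata as a flexible array member. */
typedef struct {
    pkt_meta_t meta;
    unsigned char data[];
} pkt_buf_t;

/* One malloc() per packet; returns NULL if len does not fit or on failure. */
static pkt_buf_t *pkt_alloc(size_t len) {
    if (len > UINT16_MAX)
        return NULL;
    pkt_buf_t *pb = malloc(sizeof *pb + len);
    if (pb == NULL)
        return NULL;
    memset(&pb->meta, 0, sizeof pb->meta);
    pb->meta.len = (uint16_t)len;
    return pb;
}

/* The packet bytes start immediately after the metadata. */
static unsigned char *pkt_data(pkt_buf_t *pb) {
    assert(pb != NULL);
    return pb->data;
}

/* A single free() releases both the metadata and the data. */
static void pkt_free(pkt_buf_t *pb) {
    free(pb);
}
```

Keeping the metadata adjacent to the data avoids a second allocation per packet and keeps both in nearby memory when the stack first touches the packet.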

FireEngine Functions

Because NICs are capable of data transfer speeds of 10 gigabits per second and beyond, the FireEngine project is designed to maximize data transfer between the CPU and the NIC. This makes the networking capabilities of the Solaris 10 OS as robust as, or better than, those of competing operating systems.
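A back-of-the-envelope calculation shows why per-packet cost matters at these speeds. The sketch below is not from the original document; it assumes fixed-size frames and ignores Ethernet framing overhead such as the preamble and inter-frame gap, and `packets_per_second` and `ns_per_packet` are hypothetical helper names.

```c
#include <assert.h>

/* Packets per second a link can deliver at a fixed frame size.
 * Simplifying assumption: framing overhead (preamble, inter-frame
 * gap) is ignored. */
static double packets_per_second(double link_bps, double frame_bytes) {
    assert(link_bps > 0.0 && frame_bytes > 0.0);
    return link_bps / (frame_bytes * 8.0);
}

/* Average time budget per packet, in nanoseconds, if a single CPU
 * had to handle every packet on the link. */
static double ns_per_packet(double link_bps, double frame_bytes) {
    return 1e9 / packets_per_second(link_bps, frame_bytes);
}
```

At 10 Gbit/s with 1500-byte frames this gives about 833,000 packets per second, or roughly 1.2 microseconds per packet for a single CPU; with 64-byte frames the budget drops to about 51 nanoseconds. Budgets this small are why spreading connections across CPUs and shortening each packet's code path both matter.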